Q-Learning IQ Tester

I came across the Original IQ Tester, a triangular puzzle with 14 pegs and one empty hole. I couldn’t consistently finish with a single peg left, so I asked: can a reinforcement learning agent learn to solve it better than I can? This project is my answer.

Skills & technologies

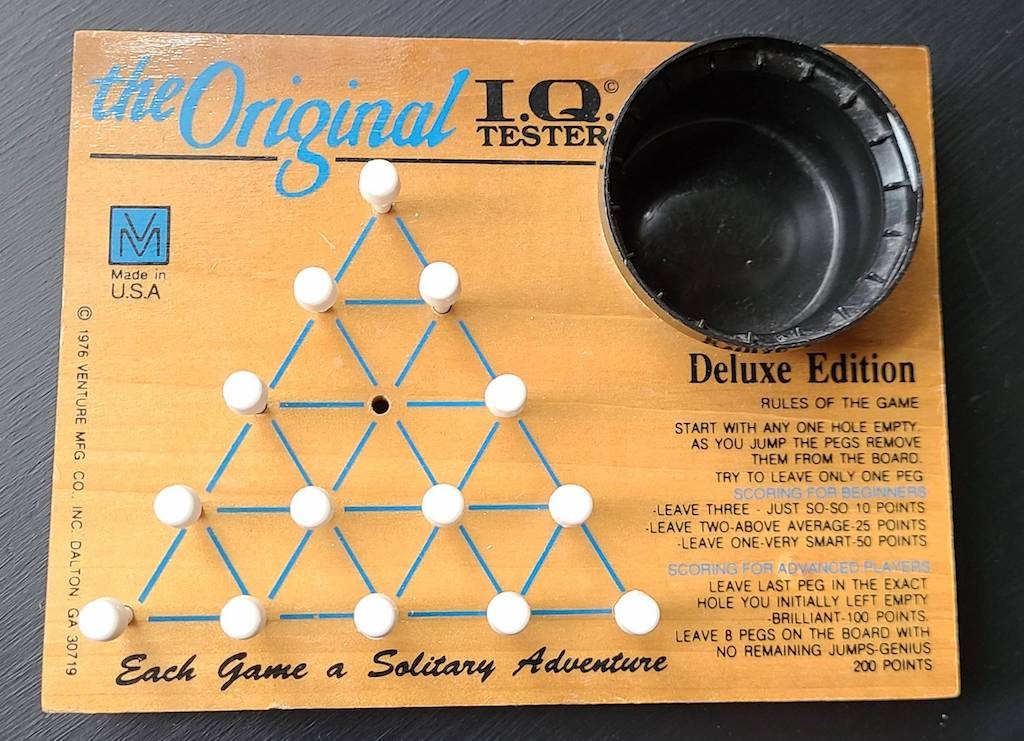

The puzzle

The Original IQ Tester is a triangular board with five holes along each edge (15 holes in total), holding 14 pegs and leaving 1 hole empty. You jump one peg over an adjacent peg into an empty hole (the jumped peg is removed) and repeat until no legal moves remain. The goal is to finish with exactly one peg. The mechanics are simple, but the puzzle is surprisingly hard. It is deterministic, sequential, and reward-sparse, which makes it a good testbed for reinforcement learning.
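The jump rule above can be sketched in a few lines. This is a minimal illustration, not the project's environment: the row-by-row hole numbering, the helper names (`hole`, `all_jumps`, `legal_moves`, `apply_move`), and the use of a plain tuple for the board are all assumptions made for the example.

```python
# Assumed hole numbering, row by row from the apex:
#         0
#       1   2
#     3   4   5
#   6   7   8   9
# 10  11  12  13  14
ROWS = 5

def hole(r, c):
    """Index of the hole at row r, column c (0 <= c <= r)."""
    return r * (r + 1) // 2 + c

def all_jumps():
    """Every (start, over, end) triple of three adjacent holes in a straight line."""
    jumps = []
    # Three line directions on a triangular grid, each traversed both ways.
    directions = [(0, 1), (1, 0), (1, 1), (0, -1), (-1, 0), (-1, -1)]
    for r in range(ROWS):
        for c in range(r + 1):
            for dr, dc in directions:
                r2, c2 = r + 2 * dr, c + 2 * dc
                if 0 <= r2 < ROWS and 0 <= c2 <= r2:
                    jumps.append((hole(r, c), hole(r + dr, c + dc), hole(r2, c2)))
    return jumps

JUMPS = all_jumps()

def legal_moves(board):
    """Jumps whose start and middle holes hold pegs and whose end hole is empty."""
    return [(s, o, e) for s, o, e in JUMPS if board[s] and board[o] and not board[e]]

def apply_move(board, move):
    """Return a new board with the jumping peg moved and the jumped peg removed."""
    s, o, e = move
    nxt = list(board)
    nxt[s], nxt[o], nxt[e] = 0, 0, 1
    return tuple(nxt)

# 14 pegs, hole 0 empty.
start = tuple(0 if i == 0 else 1 for i in range(15))
print(legal_moves(start))                  # [(3, 1, 0), (5, 2, 0)]
print(sum(apply_move(start, (3, 1, 0))))   # 13 pegs remain
```

A tuple is used here only because it is hashable and can serve directly as a dictionary key; a NumPy array would need converting (for example with `tobytes()`) before being used that way.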

What I built

- Q_Learning_IQ_tester.py: Environment (state as NumPy array, 36 legal moves), reward function with shaping, Q-learning with epsilon-greedy exploration, temporal-difference updates, training over 10k episodes per start hole, and evaluation over 100 greedy rollouts.

- peg_gui.py: Tkinter GUI that loads saved Q-tables and lets you step through the greedy solution, auto-play it, or reset the board.

- Reward shaping: small positive reward per jump (+0.25), +20 for ending with one peg, penalty for extra pegs left, so the agent gets a useful learning signal even before solving.

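- Symmetry: the board is symmetric under rotation and reflection, which groups the 15 holes into four classes. I only train on start holes {0, 1, 3, 4}, one representative per class, so training covers 4 start holes instead of 15 (roughly 4× less work) and the Q-table stays smaller.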
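To make the reward shaping and the temporal-difference update in the list above concrete, here is a rough sketch. The +0.25 and +20 values come from the description; the per-peg penalty, the learning rate `alpha`, the discount `gamma`, and the dictionary-based Q-table keyed by `(state, action)` are assumptions chosen for illustration and may differ from what Q_Learning_IQ_tester.py does.

```python
import random
from collections import defaultdict

STEP_REWARD = 0.25        # from the write-up: small bonus per jump
WIN_REWARD = 20.0         # from the write-up: bonus for ending with one peg
EXTRA_PEG_PENALTY = 1.0   # assumption: the write-up does not state this value

def shaped_reward(pegs_left, done):
    """Reward for one jump; terminal bonus or penalty is added when the game ends."""
    reward = STEP_REWARD
    if done:
        if pegs_left == 1:
            reward += WIN_REWARD
        else:
            reward -= EXTRA_PEG_PENALTY * (pegs_left - 1)
    return reward

def choose_action(Q, state, actions, epsilon, rng=random):
    """Epsilon-greedy: explore with probability epsilon, otherwise take the best-known move."""
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=lambda a: Q[(state, a)])

def td_update(Q, state, action, reward, next_state, next_actions, alpha=0.1, gamma=0.95):
    """One-step Q-learning update; a terminal next state (no actions) bootstraps from 0."""
    best_next = max((Q[(next_state, a)] for a in next_actions), default=0.0)
    target = reward + gamma * best_next
    Q[(state, action)] += alpha * (target - Q[(state, action)])

# Hypothetical single transition that ends the game with one peg left.
Q = defaultdict(float)
td_update(Q, "state_a", "jump_x", shaped_reward(1, done=True), "state_b", [])
print(Q[("state_a", "jump_x")])   # 0.1 * 20.25
```

Because the terminal bonus dominates the per-jump reward, value has to propagate backwards through many updates before early moves are informed by it, which is one reason the per-jump bonus helps early in training.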

Results

Greedy rollouts show the agent learns real strategy: it tends to remove outer pegs early, keeps symmetric shapes when it can, and avoids leaving isolated pegs. Action sequences get longer and more structured as training goes on, and the GUI makes that visible. Quantitatively, the agent sometimes reaches the optimal one-peg solution; more often it ends with two to four pegs, which is still much better than random play. Success rate depends on the starting hole, and gains level off after ~10k episodes per start. In most training runs the agent finds a “winning” policy from every start hole; when it does not, retraining a couple more times usually does it.
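A greedy rollout of the kind described here just follows the highest-valued move until none remain. The sketch below is an assumption about the shape of that loop, not the project's code: `greedy_rollout`, the `(state, action)` Q-table keys, and the tiny one-dimensional "strip" environment used to make it runnable are all hypothetical stand-ins for the real board.

```python
def greedy_rollout(Q, state, legal_moves, step):
    """Follow the highest-Q move until no legal move remains.

    Returns the final state and the list of moves taken.
    Unseen (state, action) pairs are treated as having value 0.
    """
    path = []
    while True:
        actions = legal_moves(state)
        if not actions:
            return state, path
        action = max(actions, key=lambda a: Q.get((state, a), 0.0))
        path.append(action)
        state = step(state, action)

# Stand-in environment: a strip of holes where a peg at i may jump right over i+1 into i+2.
def strip_moves(state):
    return [i for i in range(len(state) - 2)
            if state[i] and state[i + 1] and not state[i + 2]]

def strip_step(state, i):
    nxt = list(state)
    nxt[i], nxt[i + 1], nxt[i + 2] = 0, 0, 1
    return tuple(nxt)

final, path = greedy_rollout({}, (1, 1, 0, 0), strip_moves, strip_step)
print(final, path, sum(final))   # (0, 0, 1, 0) [0] 1
```

Counting `sum(final) == 1` over a set of rollouts gives the success rate, and the length of `path` is the number of jumps the policy managed before getting stuck.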

Limitations & future work

Reward is still sparse (most signal is at the end), and tabular Q-learning doesn’t generalize to unseen states, which hurts in a large state space. Some good move sequences are rare, so exploration is tough. I’d like to try a Deep Q-Network for generalization, more reward shaping or curriculum learning, symmetry-aware state compression, and better ways to propagate terminal reward (e.g. Monte Carlo returns). Even with these limits, the project shows that Q-learning can learn non-trivial policies for the IQ Tester, and the visualization was really helpful for understanding what the agent was doing.
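As one possible starting point for the symmetry-aware state compression mentioned above, the six symmetries of the triangle can be generated by permuting barycentric-style coordinates of each hole. This is a sketch under assumptions: holes are numbered row by row from the apex, the board is a 15-element tuple, and `canonical` and `orbits` are hypothetical helper names.

```python
from itertools import permutations

ROWS = 5

# For the hole at row r, column c, the triple (r - c, c, 4 - r) always sums to 4.
# Listing holes row by row makes the list position equal to the hole number.
COORDS = [(r - c, c, ROWS - 1 - r) for r in range(ROWS) for c in range(r + 1)]
INDEX = {xyz: i for i, xyz in enumerate(COORDS)}

# SYMMETRIES[k][i] is the hole that hole i maps to under the k-th symmetry.
# Permuting the three coordinates gives all 6 rotations and reflections.
SYMMETRIES = [
    [INDEX[tuple(COORDS[i][p] for p in perm)] for i in range(15)]
    for perm in permutations(range(3))
]

def canonical(board):
    """Smallest image of the board (as a tuple) over all six symmetries."""
    images = []
    for sym in SYMMETRIES:
        image = [0] * 15
        for i, peg in enumerate(board):
            image[sym[i]] = peg
        images.append(tuple(image))
    return min(images)

def orbits():
    """Group holes that the symmetries map onto each other."""
    seen, result = set(), []
    for i in range(15):
        if i not in seen:
            orbit = sorted({sym[i] for sym in SYMMETRIES})
            seen.update(orbit)
            result.append(orbit)
    return result

print(orbits())
# [[0, 10, 14], [1, 2, 6, 9, 11, 13], [3, 5, 12], [4, 7, 8]]
```

Under this numbering the smallest hole in each group is 0, 1, 3, and 4, matching the start holes used for training. If Q-values were stored against canonical boards, each move would also have to be mapped through the same symmetry before lookup.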

Run it yourself

From the repo:

    pip3 install -r requirements.txt
    python3 Q_Learning_IQ_tester.py
    python3 peg_gui.py

The CLI will ask for a start hole in {0, 1, 3, 4}. The GUI has Step (next action), Auto-play (greedy solution), and Reset.